AI Search

Where Does Google AI Get Its Information? (2026)

Google AI pulls from 4 distinct sources: web crawl, Knowledge Graph, live SERP citations, and Gemini training data. Each has different freshness and levers.

Google AI is not one model with one memory. It is a stack of four information sources stitched together at query time. The live web crawl and search index handle freshness (hours to days). The Knowledge Graph handles entities and relationships (weeks). AI Overviews synthesizes a per-query answer from 4-7 retrieved sources (real-time). Gemini's training corpus handles background knowledge (frozen until the next model). Three of the four are influenceable today; one is locked until Google ships a new Gemini generation. Most operators only optimize for one of them and wonder why their brand shows up wrong in the other three.

| Source | Update cadence | Primary lever |
|---|---|---|
| Live web crawl + search index | Hours to days | Indexable content + crawl-budget signals |
| Knowledge Graph | Weeks (4-12 lag typical) | Organization / Person schema + Wikidata + sameAs |
| AI Overviews citations | Real-time, per query | Top-10 organic rank + Direct Answer + question H2s |
| Gemini training corpus | Every 6-12 months (model-cycle) | Google-Extended robots.txt + index well before training cutoff |
| Citations per AI Overview block | 4-7 sources | n/a |
| Google-Extended user agent introduced | September 2023 | n/a |
| AI Overviews appearance rate (US English Q1 2026) | 13-15% | n/a |

I have spent the last six months watching this 4-way split play out on attrifast.com and three client properties. A common confusion among operators: assuming "Google AI" means Gemini, and that influencing Gemini means waiting for the next training run. That misses the three faster surfaces. Most of the wins on AI Overviews citations and Knowledge Panel updates land inside a quarter; only the deep training-corpus answers run on annual cycles.

When someone asks "where does Google AI get its information?", they are usually conflating four different systems Google runs in parallel. Each surface in the Google ecosystem (classic SERP, AI Overviews, AI Mode chat, Knowledge Panels, Google Lens, the Bard-now-Gemini chatbot) draws from a different mix of the four.

The four sources, listed by update speed:

Live web crawl + search index — Googlebot crawls hundreds of billions of pages on a rolling basis, updating the canonical search index that classic blue links draw from. The crawl frequency varies roughly 1000x between high-authority news sites (hours) and long-tail blogs (weeks), per Ahrefs Googlebot research.

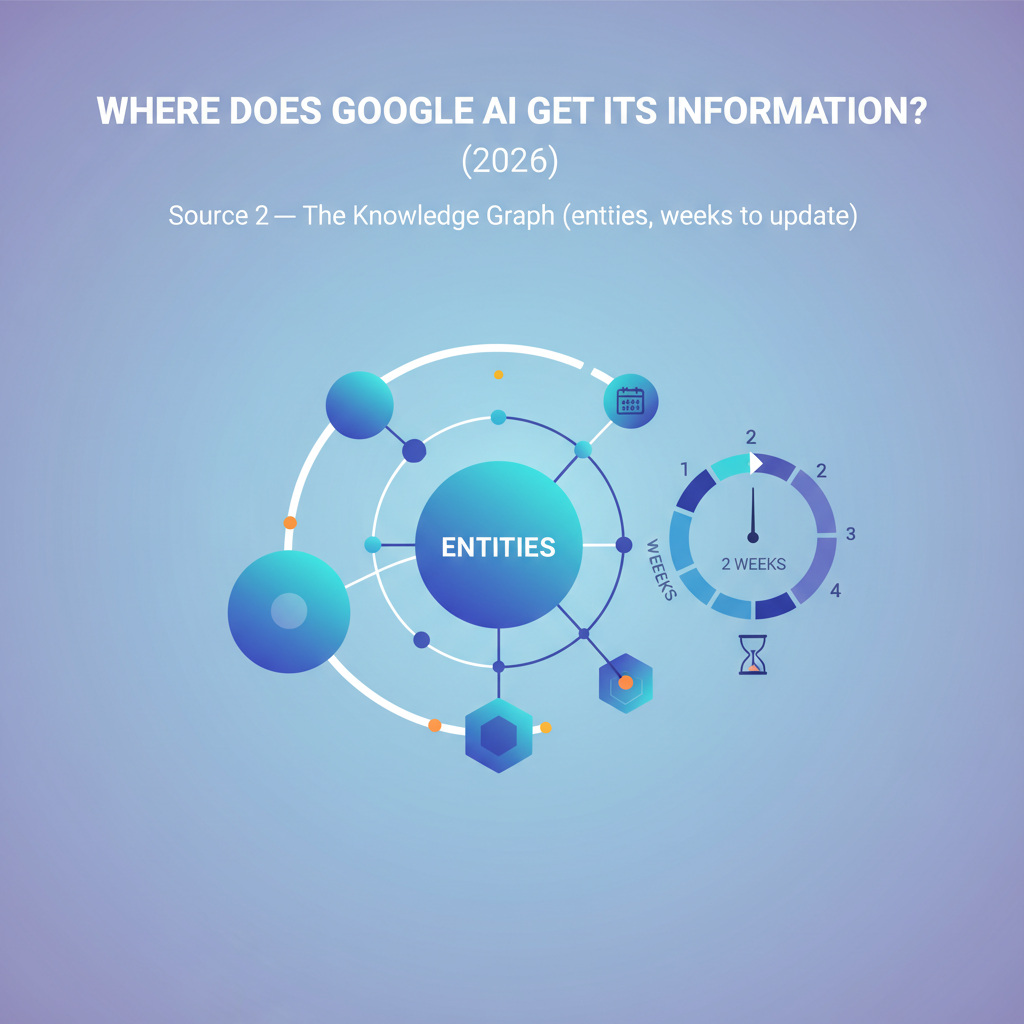

Knowledge Graph — A structured database of entities (people, companies, places, products, concepts) and the relationships between them. Updates from new web data, schema.org markup, Wikidata, and editorial curation. The lag from schema deploy to a visible Knowledge Panel change is roughly 4-12 weeks based on industry observation; Google does not publish a target.

AI Overviews real-time citations — When an AI Overview appears on a SERP, Google synthesizes the answer at query time from 4-7 sources pulled from the live index. The synthesis itself uses Gemini, but the factual claims come from pages indexed today, not from training data.

Gemini training corpus — The frozen text-and-image data Gemini learned from during pre-training. Per Google's Gemini API model cards, the Gemini 2.5 family has a training cutoff in early 2025. Anything published after that cutoff is invisible to the model's background knowledge until the next generation ships.

The diagram captures something most playbooks miss: AI Overviews depends primarily on Source 1 (the live index) for facts, with Source 4 (training data) doing the prose-shaping. That is why a brand-new article can show up in an AIO citation within days but cannot show up in a no-browsing Gemini chat answer for months.

A short caveat. Google does not publish the exact composition of any of these systems. What follows is inferred from Google's published documentation, the schema.org standard, and observable behavior across our own measurement. The full training corpus composition is not publicly disclosed in detail — that is one of the things to live with when working in this niche.

The live web crawl and search index (Source 1) is the oldest and largest of the four. Googlebot crawls the public web on a rolling basis and writes pages into the search index. Both classic SERP and AI Overviews retrieval rely on this index: when AIO picks 4-7 sources to cite for a query, it picks from the same index that ranks the blue links.

The crawl mechanics, per Google's crawler documentation:

| Crawler | Purpose | Respects robots.txt? |
|---|---|---|
| Googlebot | Standard search index (mobile + desktop) | Yes |
| Googlebot-News | News surfaces (faster cadence) | Yes |
| Google-Extended | Gemini training (formerly Bard) | Yes (robots.txt control token; fetching itself uses existing Google user agents) |
| GoogleOther | Internal R&D | Yes |
| Google-InspectionTool | Search Console / Rich Results test | Yes |

The crawl-frequency variance is the part most operators underestimate. A site like nytimes.com or stripe.com sees Googlebot multiple times a day. A low-authority blog might see it every 2-4 weeks. The 1000x variance comes from authority signals (backlink graph, prior crawl-to-index conversion rate, server response time, and the broader helpful-content classifier signals).

What this means in practice. If you publish a new article tonight, the time-to-citation in AI Overviews depends almost entirely on how often Googlebot already visits your domain. Sites that already rank well for adjacent queries get re-crawled within 24-72 hours of a new publish; sites starting from zero authority may wait weeks. That 24-72 hour figure is a directional estimate from observing our own and client publications — not a guarantee, and not a published Google SLA.
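One way to see where your own domain sits on that range is to measure the gap between Googlebot requests in your server logs. The sketch below assumes combined-format access logs and identifies Googlebot by user-agent substring only; the sample lines are hypothetical, and because user-agent strings can be spoofed, a production version would also verify the requesting IP.

```python
import re
from datetime import datetime
from statistics import median

# Captures the bracketed timestamp and the last quoted field (the user agent)
# of a combined-format access log line.
LOG_LINE = re.compile(r'\[(?P<ts>[^\]]+)\].*"(?P<ua>[^"]*)"\s*$')


def googlebot_gaps_hours(lines):
    """Return the gaps, in hours, between consecutive Googlebot requests."""
    hits = []
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("ua"):
            hits.append(datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z"))
    hits.sort()
    return [(b - a).total_seconds() / 3600 for a, b in zip(hits, hits[1:])]


# Hypothetical log lines, for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [01/May/2026:06:00:00 +0000] "GET /blog/a HTTP/1.1" 200 512 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.9 - - [01/May/2026:09:30:00 +0000] "GET /blog/a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    f'66.249.66.1 - - [02/May/2026:06:00:00 +0000] "GET /blog/b HTTP/1.1" 200 734 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [04/May/2026:06:00:00 +0000] "GET /blog/c HTTP/1.1" 200 901 "-" "{GOOGLEBOT_UA}"',
]

gaps = googlebot_gaps_hours(SAMPLE_LOG)
median_gap_hours = median(gaps)  # needs at least two Googlebot hits
print(f"Googlebot hits: {len(gaps) + 1}, median gap: {median_gap_hours:.0f}h")
```

A median gap measured in hours suggests the 24-72 hour estimate is plausible for your domain; a gap measured in weeks suggests it is not.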

The lever for Source 1 is straightforward: ship indexable content (no noindex, fast TTFB, clean canonical), build the kind of links and engagement that move Googlebot to crawl you more often, and make sure structured data is present so the index understands what each page is. The "which backlinks drive revenue" analysis covers what kind of links actually move ranking versus what just looks impressive.
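Two of those indexability checks (no noindex, clean canonical) are easy to automate. The following is a minimal sketch using only the Python standard library; the sample HTML and URL are hypothetical. It inspects on-page tags only, so it does not cover a noindex sent through the X-Robots-Tag HTTP header, and it says nothing about TTFB.

```python
from html.parser import HTMLParser


class IndexabilityParser(HTMLParser):
    """Collects robots meta directives and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots_directives = []
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.robots_directives += [d.strip().lower() for d in content.split(",")]
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href")


def indexability_report(html, expected_url):
    """Flag a noindex directive and a canonical that points elsewhere."""
    parser = IndexabilityParser()
    parser.feed(html)
    directives = parser.robots_directives
    return {
        "noindex": "noindex" in directives or "none" in directives,
        "canonical_matches": parser.canonical == expected_url,
    }


# Hypothetical page that was accidentally shipped with noindex.
SAMPLE_HTML = """
<html><head>
<meta name="robots" content="noindex, follow">
<link rel="canonical" href="https://yoursite.com/blog/post">
</head><body></body></html>
"""

report = indexability_report(SAMPLE_HTML, "https://yoursite.com/blog/post")
print(report)
```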

The Knowledge Graph is Google's structured entity database. When you search "attrifast" and see a panel on the right with the founder's name, the company description, and links to social profiles, that comes from the Knowledge Graph, not from the live web index.

Knowledge Graph entities come from three feeds, in roughly this order of trust:

The feed you control directly is on-site markup: Organization, Person, Product, or LocalBusiness schema with sameAs links pointing at matched external profiles.

Per Google's Knowledge Panel documentation, Knowledge Panel verification and updates run on Google's own cadence, not a published SLA. Industry pattern across the operators I have worked with: a new Organization schema deploy with strong sameAs starts showing in Knowledge Graph entity references in roughly 4-12 weeks. Some land in days; some never land at all because the entity is too new or ambiguous. Treat that range as a directional estimate, not a guarantee.

The sameAs property from schema.org is the linchpin. It is the explicit signal that says "this entity at URL A is the same entity at URL B." Without it, Google has to infer entity-merging from text patterns. With it, the merge is declared.

A worked example for a SaaS brand:

    <script type="application/ld+json">
    {
      "@context": "https://schema.org",
      "@type": "Organization",
      "@id": "https://yoursite.com/#organization",
      "name": "Your Brand",
      "url": "https://yoursite.com",
      "sameAs": [
        "https://www.linkedin.com/company/yourbrand",
        "https://twitter.com/yourbrand",
        "https://github.com/yourbrand",
        "https://www.crunchbase.com/organization/yourbrand"
      ]
    }
    </script>

This is the schema bundle that gets a new brand into the Knowledge Graph fastest. The full GEO playbook with Person + Article + FAQPage schema lives in the "how to get cited by AI engines" deep-dive. For brands that already have a Knowledge Panel but show stale info, the fix path is different: update the schema, file a Knowledge Panel correction request via Google's official correction flow, and wait.
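Before deploying an Organization block, it can help to lint the sameAs list. The sketch below is an illustrative check, not a Google validator: the function name is hypothetical, and the threshold of four distinct profile hosts is an assumption taken from this article's own rule of thumb rather than any published requirement.

```python
import json
from urllib.parse import urlparse


def check_same_as(json_ld_text, min_profiles=4):
    """Return a list of problems found in an Organization block's sameAs."""
    data = json.loads(json_ld_text)
    problems = []
    if data.get("@type") != "Organization":
        problems.append("@type is not Organization")
    same_as = data.get("sameAs", [])
    if isinstance(same_as, str):  # a single URL string is also valid JSON-LD
        same_as = [same_as]
    hosts = set()
    for url in same_as:
        parsed = urlparse(url)
        if parsed.scheme != "https" or not parsed.netloc:
            problems.append(f"not an absolute https URL: {url}")
            continue
        host = parsed.netloc.lower()
        hosts.add(host[4:] if host.startswith("www.") else host)
    if len(hosts) < min_profiles:
        problems.append(f"only {len(hosts)} distinct profile hosts, want {min_profiles}+")
    return problems


ORGANIZATION_JSON_LD = """
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "@id": "https://yoursite.com/#organization",
  "name": "Your Brand",
  "url": "https://yoursite.com",
  "sameAs": [
    "https://www.linkedin.com/company/yourbrand",
    "https://twitter.com/yourbrand",
    "https://github.com/yourbrand",
    "https://www.crunchbase.com/organization/yourbrand"
  ]
}
"""

problems = check_same_as(ORGANIZATION_JSON_LD)
print(problems or "sameAs looks consistent")
```

The check only confirms the markup is well-formed and covers enough distinct profiles; whether each profile actually belongs to the same entity still has to be verified by hand.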

The Knowledge Graph is also the source that fuels disambiguation when Gemini answers a question about a named entity. If Gemini knows "Attrifast" is the SaaS founded by Vincent Ruan (via Knowledge Graph), it will phrase answers about Attrifast differently from a model that has never heard the name. That feeds back into Source 4.

AI Overviews citations (Source 3) are the most-talked-about and most-misunderstood of the four. When an AI Overview appears at the top of a Google SERP, the 4-7 cited sources are not pulled from Gemini's training corpus. They are retrieved from the live search index in real time, at the moment the user submits the query.

The flow, per Google's documentation of generative AI in Search:

The key point. Source 3 is fundamentally a retrieval system on top of Source 1. The Gemini model handles the synthesis and the phrasing; the facts come from pages indexed in the last hours or days. That is why citations can update within a 24-72 hour window for fresh content on well-indexed domains. We get into the citation mechanics in more depth in the "AI Overviews ranking and citation" breakdown.

The five signals that move citation likelihood, observed across Ahrefs and Semrush 2025 research on AIO:

Notice how Source 3 leans on signals from Source 1 (rank) and Source 2 (schema + sameAs). The three are not independent surfaces — they compound. A page that has all five signals is cited dramatically more often than a page with one or two.

One honest hedge that bears repeating. The 24-72 hour citation window is observed pattern across our own publications and client sites, not a Google-published metric. On a low-authority domain with weak crawl signals, the same article might take 2-3 weeks to appear in an AIO citation. The window is bounded by Source 1's crawl cadence on your specific domain, not by an AIO-specific clock.

Source 4 is the slowest of the four and the only one without a fast lever. Gemini's training corpus is the text and image data the model learned from during pre-training. Once a model generation ships, that corpus is frozen until Google trains a new generation.

Per Google's Gemini API model documentation, each Gemini generation has a published training cutoff. The Gemini 2.5 family has a cutoff in early 2025. Anything published after that cutoff is invisible to Gemini's base-model knowledge — the model literally does not know it exists — unless one of three things happens:

The training-corpus composition is not publicly disclosed in detail. What Google has published: the corpus draws from large-scale public web text, code repositories, books, and curated multimodal data. Much of the composition is inferred from observable behavior — what Gemini "knows" suggests what it was trained on, but the exact mix and weights are not in public docs.

Two levers exist, both indirect:

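Lever 4a: Google-Extended opt-out. Google introduced the Google-Extended robots.txt token in September 2023 specifically so publishers can opt out of Gemini training while still allowing classic Googlebot indexing. It is a control token rather than a separate crawler: Google fetches pages with its existing user agents and checks this token to decide whether the content may be used for training. Add to robots.txt: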

    User-agent: Google-Extended
    Disallow: /

This tells Google not to use your content for Gemini training; it does not block search-index crawling. Use it only if you actively want to be invisible to Gemini's background knowledge. The more common scenario for SaaS brands is the opposite: you want Gemini to know you exist.
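The separation between the two tokens can be sanity-checked locally with Python's standard-library robots.txt parser. This is a rough sketch: `urllib.robotparser` approximates robots.txt matching and is not Google's own parser, and the page URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# The two-line rule, with no other groups in the file.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

page = "https://yoursite.com/pricing"  # placeholder URL
extended_allowed = parser.can_fetch("Google-Extended", page)
googlebot_allowed = parser.can_fetch("Googlebot", page)

print(f"Google-Extended allowed: {extended_allowed}")
print(f"Googlebot allowed: {googlebot_allowed}")
```

With only that group present, the Google-Extended token is disallowed while Googlebot, which matches no group, stays allowed by default.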

Lever 4b — Index well before the next training cutoff. This is the inverse: make sure your content is in Source 1 and Source 2 well before Google freezes the next training generation, so it gets included. Cutoffs are not announced in advance, but the pattern across Gemini generations has been roughly every 6-12 months. If you ship a major positioning change or a new product, you want the canonical pages indexed and the Knowledge Graph entity stable for at least 3-6 months before any plausible next-generation cutoff.

A specific failure mode worth naming. We launched the attrifast Premium tier on 2026-05-09. If you ask a no-browsing Gemini chat session today (2026-05-10) "what does Attrifast offer?", it will likely answer with the old Pro-only positioning, because the Premium launch postdates the Gemini 2.5 training cutoff. Source 1, Source 2, and Source 3 will start reflecting the change within weeks; Source 4 will not refresh until the next Gemini generation ships. There is no fast fix for that gap. You just live with it.

For the operator-side angle on this (what Google actually knows about a person or a brand at any given moment), the "does Google know everything" breakdown covers the full identity-surface mechanics, not just the AI half.

Side-by-side, the four sources look like this:

| Dimension | Source 1: Live Index | Source 2: Knowledge Graph | Source 3: AI Overviews | Source 4: Gemini Training |
|---|---|---|---|---|
| Update cadence | Hours to days | Weeks (4-12 lag typical) | Real-time, per query | Every 6-12 months |
| Primary lever | Indexable content + crawl signals | Org/Person schema + Wikidata + sameAs | Top-10 rank + Direct Answer + schema | Google-Extended robots.txt; index pre-cutoff |
| Time-to-impact | 24-72 hours (well-indexed sites) | 4-12 weeks (industry observation) | 24-72 hours (same as Source 1) | Until next model generation ships |
| Influenceable today? | Yes | Yes | Yes | Indirectly |
| Powers which surfaces? | Classic SERP, AIO retrieval | Knowledge Panels, entity disambiguation | AIO blocks, AI Mode | Gemini chat (no-browse), background knowledge for AIO synthesis |
| Citation visibility | Yes (you see ranking) | Yes (Knowledge Panel) | Yes (inline citation) | No (synthesized, no attribution) |

The table is the practical map. Three of the four surfaces give you measurable feedback (you can watch rank, watch the Knowledge Panel, watch AIO citations). The fourth is a black box — you cannot see when Gemini's background knowledge updates because the model does not surface citations for training-derived claims.

    Time-to-impact, log scale (hours → years)

    Source 1: Live index      |█▌ | ~24-72h
    Source 3: AIO citation    |█▌ | ~24-72h (depends on Source 1)
    Source 2: Knowledge Graph | █████████ | ~4-12 weeks
    Source 4: Gemini training | ████████████| ~6-12 months (next gen)

    1h 1d 1wk 1mo 6mo 1yr

Most operators want one of four outcomes from Google AI. Each maps to a different lever.

The decision tree captures one thing the comparison table does not: the four paths have different prerequisites. The Source 3 path requires you to already have ranking strength on the target query. The Source 2 path requires entity-disambiguation discipline. The Source 4 path requires patience and a head-start before the next training cutoff lands. None of them is hard individually; the hard part is recognizing which one applies to your actual goal.

For a SaaS brand that has shipped a positioning change in the last 90 days, you almost certainly want the hybrid path (F). Source 1 will reflect the change in days, Source 2 in weeks, Source 3 will start citing the new positioning within the same window — and Source 4 will lag until the next Gemini generation. The lag is unfixable; the only thing that matters is making sure 1, 2, and 3 are correct so that grounded surfaces (AI Mode, AIO) tell the right story even while the no-browsing Gemini chat is stale.

The honest part. None of the four sources is currently measurable end-to-end inside GA4.

GA4 lumps AI Overviews citation clicks as Direct/(none) — Referer headers are stripped, no UTM parameters are appended, and the destination URL is your canonical URL. Per our breakdown of GA4 attribution limitations, the same gap applies to ChatGPT and Perplexity referrals, with one difference: Perplexity usually preserves the Referer, ChatGPT often does not, AI Overviews almost never does.
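The referrer behavior described above can be turned into a coarse first-pass classifier on your own server. This is a sketch under assumptions: the host-to-label mapping is hypothetical and should be extended from your own logs, and a missing Referer cannot distinguish a stripped AI click from genuine direct traffic.

```python
from urllib.parse import urlparse

# Hypothetical host-to-surface mapping; extend it from your own logs.
AI_REFERRER_HOSTS = {
    "perplexity.ai": "perplexity",
    "chatgpt.com": "chatgpt",
    "gemini.google.com": "gemini",
}


def classify_referrer(referer):
    """Map a raw Referer header value to a coarse traffic label."""
    if not referer:
        # Covers both true direct visits and clicks whose Referer was stripped.
        return "direct_or_stripped"
    host = (urlparse(referer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for known, label in AI_REFERRER_HOSTS.items():
        if host == known or host.endswith("." + known):
            return label
    if host == "google.com" or host.endswith(".google.com"):
        return "google_search"
    return "other"


for sample in ["https://www.perplexity.ai/search?q=attribution", "", "https://www.google.com/"]:
    print(repr(sample), "->", classify_referrer(sample))
```

The "direct_or_stripped" bucket is exactly the ambiguity the paragraph above describes, which is why referrer inspection alone cannot isolate AI-driven visits.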

What you can measure today, surface by surface:

The piece GA4 cannot do — joining an AI-referral click back to a Stripe payment so you know which Google AI surface actually moved revenue — is the gap that motivated the attrifast first-party tracking architecture. A 4KB cookieless script drops a first-party session ID on the landing page, scoped to your own domain so ITP and Total Cookie Protection do not touch it. A Stripe webhook handler joins the session back to a checkout.session.completed event server-side, which means the join works even across the days-to-weeks gap between visit and conversion. The same architecture is documented in more depth on the revenue attribution feature page.
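The server-side join can be sketched in a few lines. This is an illustrative outline, not the attrifast implementation: it assumes the first-party session ID was passed to Stripe Checkout as `client_reference_id` (the article does not say which field is used), it uses an in-memory dict as a stand-in for the session store, and it omits webhook signature verification, which a real handler needs.

```python
from datetime import datetime, timezone

# In-memory stand-in for a first-party session store (hypothetical schema).
SESSIONS = {
    "sess_abc123": {
        "landing_referrer": "https://www.perplexity.ai/",
        "first_seen": datetime(2026, 5, 1, 9, 0, tzinfo=timezone.utc),
    },
}


def attribute_checkout(event, sessions):
    """Join a checkout.session.completed event to a stored session, if any."""
    if event.get("type") != "checkout.session.completed":
        return None
    checkout = event["data"]["object"]
    session_id = checkout.get("client_reference_id")
    session = sessions.get(session_id)
    if session is None:
        return None  # no first-party session recorded for this purchase
    paid_at = datetime.fromtimestamp(event["created"], tz=timezone.utc)
    return {
        "session_id": session_id,
        "landing_referrer": session["landing_referrer"],
        # amount_total is in the smallest currency unit; /100 assumes a
        # two-decimal currency such as USD.
        "amount": checkout["amount_total"] / 100,
        "currency": checkout["currency"],
        "days_to_convert": (paid_at - session["first_seen"]).days,
    }


# Hypothetical event payload, trimmed to the fields used above.
SAMPLE_EVENT = {
    "type": "checkout.session.completed",
    "created": int(datetime(2026, 5, 8, 9, 0, tzinfo=timezone.utc).timestamp()),
    "data": {"object": {
        "client_reference_id": "sess_abc123",
        "amount_total": 2900,
        "currency": "usd",
    }},
}

attribution = attribute_checkout(SAMPLE_EVENT, SESSIONS)
print(attribution)
```

Because the join key travels with the checkout rather than with a browser cookie at payment time, the lookup still works when the purchase happens days after the first visit.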

I will not publish per-source RPV numbers yet. We have not shipped the AIO-specific detection layer on attrifast.com yet, and the SaaS analytics niche is full of fabricated case-study figures. The architecture is the part that is publishable today; the numbers are 90 days out.

Google AI is not a single system. It stitches together four distinct sources: (1) the live web crawl and search index that Googlebot updates hours-to-days fresh, (2) the Knowledge Graph of entities and relationships, which updates on a scale of weeks, (3) real-time AI Overviews citations synthesized at query time from top SERP results, and (4) Gemini's frozen training corpus, which only refreshes when Google ships a new model. Each source has a different freshness and a different lever for influencing what Google AI knows about you.

AI Overviews mostly do not draw their facts from Gemini's training data. Per Google's published documentation on AI features in Search, AI Overviews are synthesized at query time from the same web index that powers the classic SERP. The Gemini model contributes the language synthesis and reasoning, but the factual content comes from the 4-7 cited sources retrieved fresh for each query. Training data influences how Gemini phrases the answer and what background knowledge it brings to disambiguation, but the citable facts come from the live index. This is also why citations can update within 24-72 hours of new content publication, while training-data answers are frozen until the next model generation.

Three signals move the needle on Knowledge Graph inclusion: Organization schema with sameAs links pointing at 4+ matched identity profiles (LinkedIn, X, GitHub, Crunchbase), a Wikidata entry (which often seeds Knowledge Graph entries), and consistent name-address-phone or name-URL pairs across high-trust mentions. The lag from schema deploy to a visible Knowledge Panel update is roughly 4-12 weeks based on industry observation, not a guarantee. The full mechanics live in our "get cited by AI engines" deep-dive.

You can partially opt out of Gemini training. Google introduced the Google-Extended robots.txt token in September 2023 specifically so publishers can disallow use of their content for training while still allowing classic Googlebot indexing for search. Add User-agent: Google-Extended plus Disallow: / to your robots.txt and Gemini will not train on the disallowed paths. The catch: opting out also reduces the chance that Gemini ever learns your brand exists as background context for future answers. Most operators should stay opted in for visibility and accept the tradeoff.

Google AI can show outdated information about a brand because three of the four sources update quickly but the fourth (Gemini training data) cannot. If Google AI is repeating a pricing claim, a feature description, or a positioning line that is no longer true, it most likely comes from training data that froze before your update shipped. Levers to fix it: get the corrected information into the live index (so AI Overviews can cite the fresh version), update Wikidata if there is an entry, and wait. Gemini training cutoffs arrive roughly every 6-12 months, so the frozen claim will refresh on the next model generation.
