AI Search

Google AI Overviews 2026: How They Rank, Cite & Convert

How Google AI Overviews actually work in 2026: trigger patterns, citation mechanics, traffic loss, and the GA4 attribution gap nobody fixes.


Google AI Overviews is the LLM-generated answer block that sits above the classic blue links on roughly 13-15% of US English Google SERPs as of Q1 2026. It pulls from 4-7 cited sources per block, picks them from a narrower "trusted" allowlist than ChatGPT or Perplexity does, and appears most often on informational and procedural queries. When it shows up, the top organic blue links lose roughly 30-40% of their clicks. The cited footnotes earn 2-4% on their own. GA4 cannot tell you which of your sessions came from an AI Overviews citation; every click lands as Direct/(none). That last fact is the part most operators miss.

| Spec | Value |
|---|---|
| Launched | May 2024 (production), May 2023 (SGE labs) |
| US English SERP appearance rate (Q1 2026) | 13-15% |
| Sources cited per AIO block | 4-7 |
| Informational query trigger rate | ~40% |
| Procedural ("how to") trigger rate | >50% |
| YMYL trigger rate | 5-8% |
| Transactional / branded trigger rate | under 3% |
| Blue-link CTR drop when AIO appears (info) | ~30-40% |
| Blue-link CTR drop (commercial) | ~10-15% |
| AIO footnote CTR | ~2-4% |
| GA4 default attribution accuracy for AIO clicks | ~0% (lumped as Direct/(none)) |

I have spent the last six months watching AI Overviews mechanics across attrifast.com plus three client SaaS properties. The plain finding: it is a smaller traffic surface than ChatGPT or Perplexity, but the per-citation conversion on commercial queries is meaningfully better, and the measurement story is worse. Before we get to "how to optimize," it helps to know exactly what the surface is and is not.

AI Overviews is Google's production LLM-generated answer block, built on the Gemini family of models, that renders at the top of the SERP for queries Google's classifier flags as "good fit for a generative summary." It launched broadly in May 2024 after a year of labs-stage testing under the SGE (Search Generative Experience) name, per Google's official Search blog. The block ships with 4-7 cited source links beside or beneath the generated text, and clicking a source takes the user to the cited page (sometimes with a Referer header, often without).

What it is not: it is not a separate product, it is not opt-in, and it is not a chat interface. The user types a query into Google like always; the SERP just happens to surface an AI summary above the classic 10 blue links. There is no follow-up turn. There is no conversation memory.

The diagram captures the asymmetry. If you are cited, you get a small CTR claw-back. If you are below the AIO and not cited, you eat the full CTR hit with nothing in return. Per Search Engine Land's AIO tracking, the appearance rate climbed from ~7% at May 2024 launch to a sustained 13-15% range through Q1 2026, with mobile triggering slightly more than desktop.

A common confusion: AI Overviews is not Google Discover, not Featured Snippets, and not the older Knowledge Panel. Featured Snippets are a single-source extracted block; AIO is multi-source synthesized text. Knowledge Panels pull from Wikidata and structured entity sources; AIO pulls from the live web crawl. AIO, Featured Snippets, and Knowledge Panels sometimes co-occur on the same SERP, which adds visual clutter, but each has its own ranking mechanics.

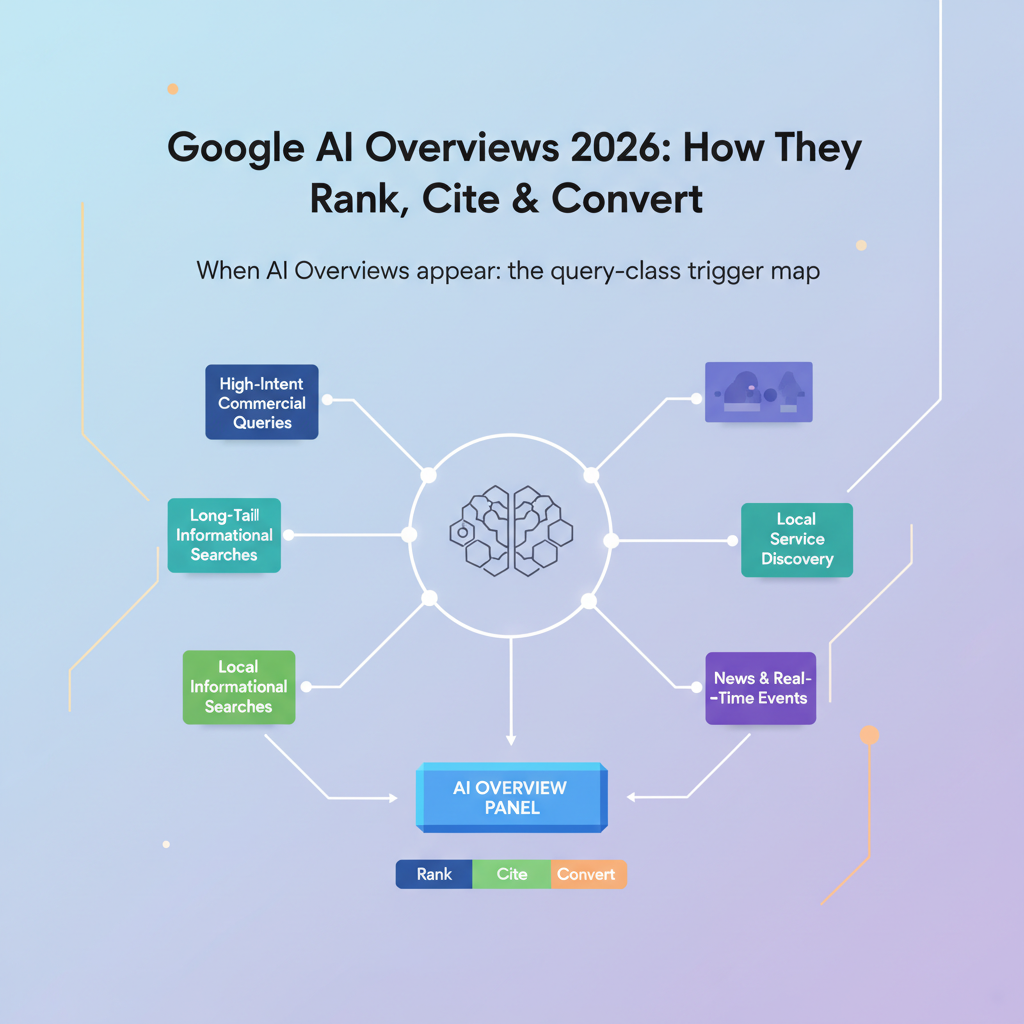

The 13-15% blended appearance rate is misleading on its own. The split by query class is what actually matters for content planning.

| Query class | Example | AIO trigger rate | Source |
|---|---|---|---|
| Informational ("what is", "why does") | "what is revenue attribution" | ~40% | Ahrefs 2025 |
| Procedural ("how to", "how do I") | "how to track utm parameters" | >50% | Semrush AIO study |
| Comparison ("X vs Y") | "plausible vs fathom" | ~25-30% | Semrush AIO study |
| YMYL (medical/legal/financial) | "best statin for cholesterol" | 5-8% | Ahrefs 2025 |
| Transactional ("buy", "pricing") | "stripe pricing" | under 3% | Ahrefs 2025 |
| Branded ("[brand] login") | "attrifast login" | under 1% | observed |
| Local ("near me") | "coffee near me" | under 2% | observed |

The pattern Google's classifier seems to learn: when the query has a clean factual answer that synthesizes well from multiple sources, ship the AIO. When the query is YMYL, where wrong answers cost lives or money, hold back. When the query is transactional, the user wants a product page, not a summary. Per Semrush's AIO research from Q4 2025, procedural queries ("how to") trigger AIO 53% of the time on average, the highest of any class.

For attribution-and-analytics content (my niche), the practical implication: a "how does Stripe revenue attribution work" article will face AIO competition; a "Stripe pricing 2026" landing page will not. The first needs to be cited inside the AIO to recover any of the lost CTR. The second can ignore AIO mechanics entirely and just chase blue-link rank.
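To turn the per-class trigger rates into a planning number, you can weight them by your own keyword mix. The following is a minimal sketch, not a forecast: the rates are rough midpoints or upper bounds read off the table above, and the portfolio keywords' volumes and the function name `expected_aio_searches` are hypothetical.

```python
# Approximate trigger rates read off the table above. Ranges use the midpoint,
# "under N%" rows use N as an upper bound, and procedural uses the 53% figure
# quoted from Semrush. Treat all of them as rough assumptions.
TRIGGER_RATE = {
    "informational": 0.40,
    "procedural": 0.53,
    "comparison": 0.275,
    "ymyl": 0.065,
    "transactional": 0.03,
    "branded": 0.01,
    "local": 0.02,
}


def expected_aio_searches(keywords):
    """Estimate monthly searches that show an AIO, per keyword and in total.

    keywords maps keyword -> (query_class, monthly_search_volume).
    """
    per_keyword = {
        keyword: volume * TRIGGER_RATE[query_class]
        for keyword, (query_class, volume) in keywords.items()
    }
    return per_keyword, sum(per_keyword.values())


# Hypothetical portfolio; the volumes are made up for illustration.
portfolio = {
    "how to track utm parameters": ("procedural", 2000),
    "what is revenue attribution": ("informational", 1000),
    "stripe pricing": ("transactional", 5000),
}

per_keyword, total = expected_aio_searches(portfolio)
for keyword, searches in sorted(per_keyword.items(), key=lambda kv: -kv[1]):
    print(f"{keyword}: ~{searches:.0f} AIO-exposed searches/month")
print(f"total: ~{total:.0f}")
```

Sorting by exposed searches rather than raw volume puts the procedural keyword ahead of the much larger transactional one, which is the content-planning point of the split by query class.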

One caveat I will admit: the trigger rates above are observed averages across large samples, but Google updates the classifier roughly monthly. A query that triggered AIO 40% of the time in March 2026 may flip to 60% by July if the model decides the topic is "summary-friendly." Track your top 20 keywords with a SERP-feature monitor (Semrush, Ahrefs, or Rank Math all work) and re-check quarterly.

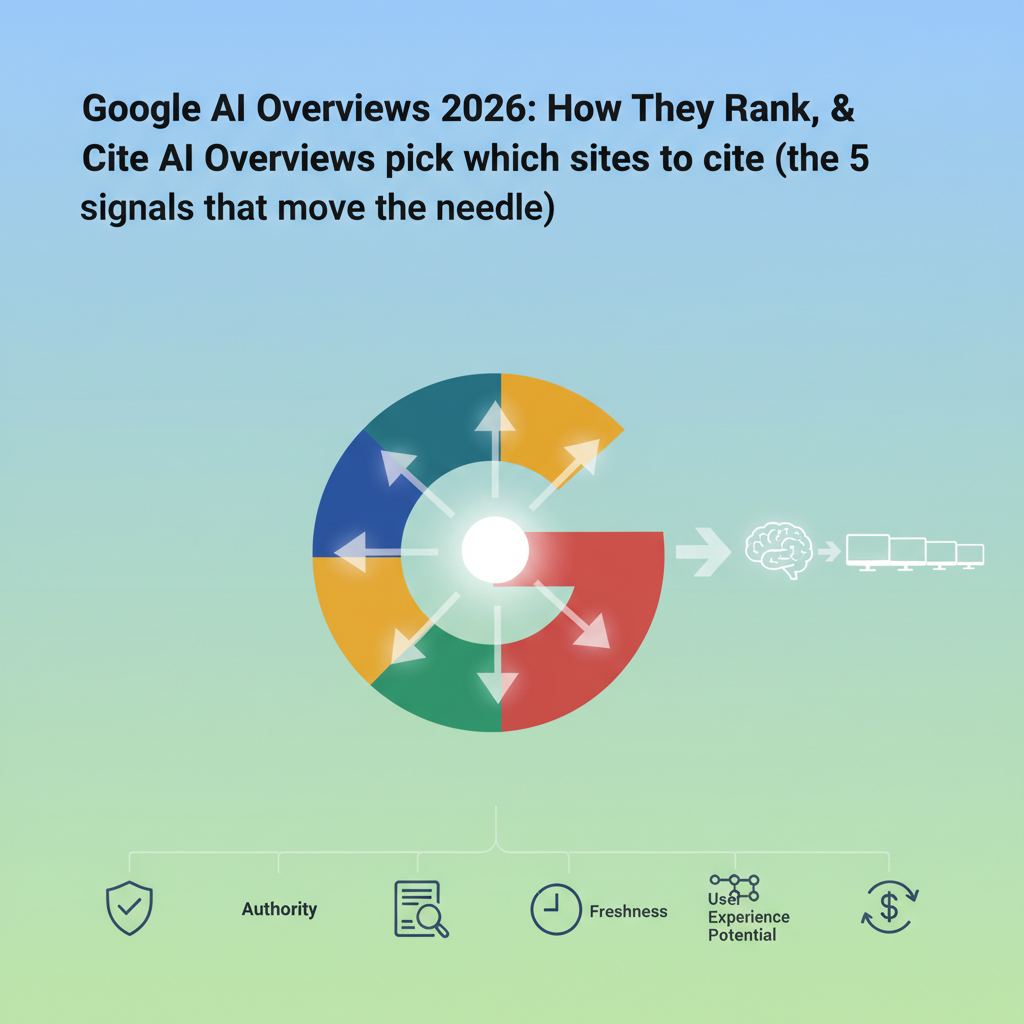

This is where most operators get it wrong. The signals that drive AIO citation are not the same as the signals that drive ChatGPT or Perplexity citation. AIO is more conservative.

The five signals that consistently differentiate cited from uncited pages, per Ahrefs 2025 GEO research (n=10,000+ pages) and Semrush AIO citation study:

Existing top-10 organic rank for the query. Pages in positions 1-3 are cited roughly 4 times more often than pages in positions 4-10. Below position 10, citation is rare. AIO does not "discover" you; it picks from sites Google already trusts on the topic.

Structured data (Article + FAQPage + HowTo JSON-LD). Pages with all three schema types are cited roughly 2-3x more than pages with only Article. The FAQPage matters most because the question-answer pairs map cleanly to AIO's synthesis pattern. See the how to get cited by AI engines playbook for the exact schema bundle.

Direct Answer paragraph in the first 120 words. The TldrBox + Direct Answer pattern at the top of this article is what gets lifted. AIO synthesis prefers pre-extracted, self-contained answers it can paraphrase without scrolling.

Question-shaped H2 headers. "How do AI Overviews pick sources" beats "Source selection mechanics." The H2 needs to mirror the user's natural-language query.

Entity disambiguation via sameAs links. Pages on domains with Organization schema linking 4+ matched social profiles (LinkedIn, GitHub, X, Crunchbase) are cited at a higher rate than disambiguation-poor domains. Real-identity signals matter more for AIO than for the chat assistants.

A signal that does not move the needle as much as people think: word count. Cited pages average 1,800-2,400 words, but uncited pages in the same length band exist in equal numbers. Long-form alone is not a citation signal.

A signal that hurts: AI-generated content that fails Google's helpful-content classifier. Per Google's helpful content guidance, pages flagged as low-utility are demoted in classic rank, which mechanically removes them from AIO citation eligibility (since AIO draws from top-10).

The compounding part is what most playbooks underweight. If you have schema but rank position 12, you will rarely be cited. If you rank position 2 but have no Direct Answer paragraph, you will be cited less than the position-3 site that does. The five signals stack; missing one drags the others down.

Use this 12-item checklist to audit any page you want cited in an AI Overview. Aim for 10+ checks before publishing; below 8 is a red flag.

Pre-publish AIO citation readiness checklist:

Article JSON-LD with headline, datePublished, dateModified, author (linked Person entity), publisher (linked Organization entity), and mainEntityOfPage.

FAQPage JSON-LD with at least 4 question-answer pairs that exactly match a visible ## FAQ H2 section on the page. Mismatch between schema and visible HTML is a Google-flagged inconsistency.

HowTo JSON-LD if the content is procedural. Skip if the article is purely informational.

Organization schema with sameAs linking 4+ matched social profiles for the publishing brand.

Person schema with sameAs linking the author's LinkedIn (minimum), ideally GitHub or X as well.

Drop-in JSON-LD bundle for items 3-4-5-10-11:

```html
<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@graph": [
    {
      "@type": "Article",
      "@id": "https://yoursite.com/blog/your-slug#article",
      "headline": "Your Headline",
      "datePublished": "2026-05-10",
      "dateModified": "2026-05-10",
      "author": { "@id": "https://yoursite.com/about#person" },
      "publisher": { "@id": "https://yoursite.com/#organization" },
      "mainEntityOfPage": "https://yoursite.com/blog/your-slug"
    },
    {
      "@type": "FAQPage",
      "mainEntity": [
        {
          "@type": "Question",
          "name": "Your visible H2 question 1",
          "acceptedAnswer": {
            "@type": "Answer",
            "text": "Your visible answer 1, matching the H2 prose."
          }
        }
      ]
    },
    {
      "@type": "Person",
      "@id": "https://yoursite.com/about#person",
      "name": "Author Name",
      "url": "https://yoursite.com/about",
      "sameAs": [
        "https://www.linkedin.com/in/author/",
        "https://github.com/author"
      ]
    },
    {
      "@type": "Organization",
      "@id": "https://yoursite.com/#organization",
      "name": "Your Brand",
      "url": "https://yoursite.com",
      "sameAs": [
        "https://www.linkedin.com/company/yourbrand",
        "https://twitter.com/yourbrand",
        "https://github.com/yourbrand",
        "https://www.crunchbase.com/organization/yourbrand"
      ]
    }
  ]
}
</script>
```

Validate against Google's Rich Results test and schema.org validator. Mismatches between the visible H2 FAQ block and the JSON-LD FAQPage are the single most common reason AIO ignores otherwise-eligible pages, per the structured-data error patterns Google documents in their FAQ schema guidelines.
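The schema-versus-visible-HTML mismatch is easy to check locally before you reach for the external validators. Here is a rough sketch using only the Python standard library. It assumes the FAQ questions appear on the page as `<h2>` or `<h3>` headings and that matching is case- and whitespace-insensitive; both are my assumptions, and the function name `faq_questions_missing_from_page` is hypothetical.

```python
import json
from html.parser import HTMLParser


def _norm(text):
    """Lowercase and collapse whitespace so cosmetic differences do not count."""
    return " ".join(text.split()).lower()


class _FaqAudit(HTMLParser):
    """Collects visible h2/h3 heading text and raw JSON-LD script bodies."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self.jsonld_blobs = []
        self._capture = None  # "heading", "jsonld", or None
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._capture, self._buf = "heading", []
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capture, self._buf = "jsonld", []

    def handle_data(self, data):
        if self._capture:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag in ("h2", "h3") and self._capture == "heading":
            self.headings.append("".join(self._buf))
            self._capture = None
        elif tag == "script" and self._capture == "jsonld":
            self.jsonld_blobs.append("".join(self._buf))
            self._capture = None


def faq_questions_missing_from_page(html):
    """Return FAQPage question names that have no matching visible heading."""
    audit = _FaqAudit()
    audit.feed(html)
    visible = {_norm(h) for h in audit.headings}
    missing = []
    for blob in audit.jsonld_blobs:
        data = json.loads(blob)
        nodes = data.get("@graph", [data]) if isinstance(data, dict) else data
        for node in nodes:
            if node.get("@type") != "FAQPage":
                continue
            for question in node.get("mainEntity", []):
                name = question.get("name", "")
                if _norm(name) not in visible:
                    missing.append(name)
    return missing
```

Run it against the rendered HTML of a page and an empty list means every schema question has a visible counterpart. It does not compare answer text, so treat a clean result as a first pass rather than a full validation.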

Three citation surfaces, three different rules. Operators who treat them the same waste effort.

| Dimension | Google AI Overviews | ChatGPT (browsing) | Perplexity |
|---|---|---|---|
| Daily query volume (rough) | ~1B+ AIO-eligible (of ~8.5B Google searches, per Statista 2025) | ~1B daily messages, per OpenAI Q4 2025 | ~30-50M daily queries (Q1 2026 estimate) |
| Source allowlist | Narrow (top-10 organic + trust signals) | Wider (live browse + cached) | Widest (3-7 sources per answer, less filtered) |
| Citations per answer | 4-7 | 3-5 typical | 3-7, always shown |
| Footnote click-through rate | ~2-4% | ~3-5% | ~5-8% |
| Referer header on click | Often stripped | Sometimes stripped | Usually preserved |
| GA4 attribution accuracy | ~0% (Direct/none) | ~0% (Direct/none) | ~30-50% (referrer often kept) |
| Best-fit content surface | Informational, procedural | Conversational, exploratory | Research, comparison |

The takeaway: AI Overviews is the smallest absolute traffic surface (since it only fires on 13-15% of SERPs) but it sits inside the largest user funnel (Google itself). ChatGPT has the largest absolute query volume of the three. Perplexity has the highest citation density and the friendliest referrer behavior, which is why per-citation traffic is highest there.

For revenue, the order tends to be: AIO > ChatGPT > Perplexity per cited click on commercial-intent topics, because Google still pre-qualifies users for purchase intent better than the chat assistants do. (Yes, this contradicts the volume numbers; the difference is intent quality, not absolute clicks.) On pure information topics, the order flips toward Perplexity because the user is in research mode and the citation density gives them more reason to click.

The AI traffic revenue attribution breakdown covers the per-engine conversion rates we have seen across client sites in more detail. The short version: do not assume AIO traffic converts like organic Google traffic. It often converts higher on commercial keywords (the user got a partial answer, then clicked through to validate) and lower on informational ones (the user got the full answer in the AIO and left).

Zero-click is the term for a SERP visit that ends without the user clicking through to any source. Pre-AIO, zero-click rates ran around 50% on US Google per Semrush's 2024 zero-click study, driven by Featured Snippets and direct-answer SERP features. Post-AIO, the rate climbed.

The mechanism is simple: the AIO block answers the question fully on the SERP, so the user has no reason to click. Per Ahrefs CTR data through 2025, informational queries with an AIO block see organic CTR drop from a baseline of ~28% (position 1, no AIO) to ~17% (position 1, AIO present), roughly a 40% relative drop on the top blue link. Position 2-3 drops are even steeper in relative terms.

The asymmetry: cited sources inside the AIO get a small offsetting click. Footnote CTR runs 2-4% per source, so if you are one of 5 cited sources you may pick up ~3% of total query clicks that you would not have gotten as the position-7 blue link. If you are not cited, you absorb the full CTR drop with no offset.

Concrete worked example for a hypothetical query at 10,000 monthly searches:

No AIO:

Position 1 (you): 28% CTR × 10,000 = 2,800 clicks/month

AIO appears, you cited as footnote 2 of 5, ranked position 1 below:

Footnote click: 3% × 10,000 = 300 clicks/month

Position 1 organic (reduced): 17% × 10,000 = 1,700 clicks/month

Total: 2,000 clicks/month (~29% loss vs no-AIO)

AIO appears, you NOT cited, ranked position 1 below:

Footnote click: 0

Position 1 organic (reduced): 17% × 10,000 = 1,700 clicks/month

Total: 1,700 clicks/month (~39% loss vs no-AIO)

The roughly 10-percentage-point gap between "cited" and "not cited" is the lever: 300 clicks/month in this example. On a $50 RPV (revenue per visitor) page, that gap is $15k/month per 10k-volume keyword (300 × $50). Stack that across 20-30 commercial keywords and the work to optimize for AIO citation pays for itself inside a quarter, assuming you can measure it, which is the next problem.
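The worked example above is simple enough to reproduce in a few lines, which makes it easy to plug in your own volume and CTR figures. This is a sketch of the same arithmetic only; the CTR values are the ones quoted in this section, and the function names are mine.

```python
def monthly_clicks(searches, organic_ctr, footnote_ctr=0.0):
    """Clicks per month from the organic listing plus an optional AIO footnote."""
    return round(searches * (organic_ctr + footnote_ctr))


def relative_loss(clicks, baseline):
    """Share of baseline clicks lost, as a fraction between 0 and 1."""
    return 1 - clicks / baseline


SEARCHES = 10_000  # hypothetical monthly search volume from the example

baseline = monthly_clicks(SEARCHES, organic_ctr=0.28)                   # no AIO
cited = monthly_clicks(SEARCHES, organic_ctr=0.17, footnote_ctr=0.03)   # AIO, cited
uncited = monthly_clicks(SEARCHES, organic_ctr=0.17)                    # AIO, not cited

print(baseline, cited, uncited)                   # 2800 2000 1700
print(f"{relative_loss(cited, baseline):.0%}")    # 29%
print(f"{relative_loss(uncited, baseline):.0%}")  # 39%
print(cited - uncited)                            # 300 clicks/month between cited and not cited
```

Multiply the final click gap by your own revenue per visitor to size the citation lever for a given keyword.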

This is the part most playbooks skip. GA4 attributes essentially 0% of AI Overviews referral clicks correctly. Every cited-footnote click lands as Direct/(none).

Three mechanical reasons:

Stripped Referer. Google's AIO block uses link rel attributes and intermediate redirects that result in the destination page receiving an empty Referer header on most browsers. GA4's default channel grouping requires a Referer to classify the source.

No UTM parameters. AIO citation links do not carry utm_source=google_aio or any equivalent campaign tag. There is no programmatic way to bucket the click via the standard GA4 attribution pipeline.

Identical landing-page URL. The cited link is your canonical URL, indistinguishable from a direct paste-in-browser visit or a bookmark click. GA4 has no in-product way to fingerprint the origin.
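The three reasons above reduce to one fallback path, which a toy classifier makes visible. To be clear, this is a deliberately simplified sketch and not GA4's actual channel-grouping rules: it only illustrates that a hit with no campaign tags and no referrer has nothing left to classify on. The function name `classify_session` is hypothetical.

```python
from urllib.parse import parse_qs, urlparse


def classify_session(landing_url, referrer=None):
    """Return a (source, medium) pair using a simplified precedence order.

    1. Campaign tags on the landing URL win if present.
    2. Otherwise fall back to the referrer's hostname.
    3. With neither, the only remaining label is direct.
    """
    params = parse_qs(urlparse(landing_url).query)
    if "utm_source" in params:
        medium = params.get("utm_medium", ["(not set)"])[0]
        return params["utm_source"][0], medium
    if referrer:
        return urlparse(referrer).hostname, "referral"
    return "(direct)", "(none)"


# A click with a stripped Referer and a clean canonical URL:
print(classify_session("https://yoursite.com/blog/your-slug"))

# The same landing page reached with the referrer preserved:
print(classify_session("https://yoursite.com/blog/your-slug",
                       referrer="https://www.perplexity.ai/"))
```

The first call has no input that distinguishes it from a bookmark or a pasted URL, which is why no in-product setting can fix it.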

This compounds with the broader cross-site tracking shutdown, which already evaporates ~30% of paid-search attribution on Safari and Firefox traffic. Combine the two and the GA4 channel report becomes structurally untrustworthy for any traffic that touches AI surfaces.

The fix has to live outside GA4. Server-side first-party tracking that pattern-matches incoming requests against known AIO behaviors (specific User-Agent strings on the pre-fetch, time-of-day patterns, landing-URL signatures) can recover most of it, but it requires custom instrumentation. This is exactly the gap that motivated the GA4 revenue attribution limitations breakdown and our cookieless revenue analytics feature.

A specific honest hedge: even with server-side AIO detection, you cannot recover users who saw the AIO and never clicked at all. Zero-click is genuinely zero-click. The measurement gap there is unfixable; the best you can do is monitor your impression count via Google Search Console and back-calculate the zero-click delta. Search Console reports "Search Appearance: AI Overview" as a separate dimension as of late 2025, which helps for impression-side measurement even though click-side stays broken.
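The back-calculation mentioned above is a one-line formula: expected clicks at your pre-AIO click-through rate, minus the clicks you actually received. A minimal sketch, assuming you already have impressions and clicks per query exported from Search Console and a baseline CTR you measured before the AIO appeared; the sample row is hypothetical and reuses the figures from the worked example earlier.

```python
def zero_click_delta(impressions, clicks, baseline_ctr):
    """Estimate clicks lost to zero-click behavior for one query.

    baseline_ctr is the click-through rate you observed for the same
    position before an AIO appeared; it is an input, not something this
    function can infer.
    """
    expected_clicks = impressions * baseline_ctr
    return max(0.0, expected_clicks - clicks)


# Hypothetical rows: (query, impressions, clicks, baseline CTR).
rows = [
    ("how to track utm parameters", 10_000, 1_700, 0.28),
]

for query, impressions, clicks, baseline_ctr in rows:
    lost = zero_click_delta(impressions, clicks, baseline_ctr)
    print(f"{query}: ~{lost:.0f} clicks/month lost")
```

The estimate is only as good as the baseline CTR, and it cannot separate AIO-driven zero-click from other SERP features on the same page, so read it as an upper-bound indicator rather than an attribution figure.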

A note on this section: I want to publish numbers from running this measurement on attrifast.com itself. We have not shipped the AIO-detection layer yet. The honest answer here is methodology, not a case study.

Here is the architecture I would (and will) instrument:

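Step 1: Server-side AIO referral detection. A server-side handler that inspects every incoming request for AIO-attributed signals. The patterns to watch for are the ones named earlier: specific User-Agent strings on the pre-fetch, time-of-day patterns, and landing-URL signatures.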

Step 2: First-party session ID. A 4kb client-side script that drops a first-party cookie or sessionStorage token on the landing page, scoped to your own domain so ITP and Total Cookie Protection do not touch it.

Step 3: Stripe webhook join. A checkout.session.completed webhook handler that joins the session ID back to the original AIO-attributed visit, server-side. No reliance on browser-side cookie persistence over the days-to-weeks Stripe checkout window.

Step 4: Revenue per AIO citation. Aggregate by query and source page; track RPV (revenue per visitor) for AIO-attributed sessions versus Direct, Organic, and other AI engines.

This is the architecture behind Attrifast's UTM-to-revenue tracking and the Stripe-native attribution layer. The AIO-detection rules are a roadmap item, not yet shipped. We will publish our own RPV numbers once we have 90 days of clean data — likely Q3 2026 — and we will not publish synthetic numbers before then.

The reason for the caution: the SaaS analytics niche is full of made-up case-study figures, and the easiest way to lose long-term credibility is to ship a "we drove X% revenue lift from AIO citations" claim with no instrumentation behind it. Per Vincent's GEO playbook, the same caveat applies to AI engines generally: measure first, claim later, and never the reverse.
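Steps 2 through 4 of the architecture can be sketched without any detection rules at all, since the join and the aggregation do not depend on how a visit was labeled. The following is an illustrative outline, not Attrifast's implementation. It assumes the first-party session ID is passed to Stripe Checkout as `client_reference_id` (my assumption about the wiring), uses in-memory dicts where production code would use a database, and skips webhook signature verification, which a real handler must do.

```python
from collections import defaultdict

# session_id -> channel label assigned at landing time (Step 1 output).
visits = {}

# channel -> revenue in the smallest currency unit (cents for USD).
revenue_cents = defaultdict(int)


def record_visit(session_id, channel):
    """Step 2: remember the first channel seen for a first-party session ID."""
    visits.setdefault(session_id, channel)  # first touch wins


def handle_stripe_event(event):
    """Step 3: join a completed checkout back to the originating visit."""
    if event.get("type") != "checkout.session.completed":
        return
    checkout = event["data"]["object"]
    session_id = checkout.get("client_reference_id")
    channel = visits.get(session_id, "unattributed")
    revenue_cents[channel] += checkout.get("amount_total") or 0


def revenue_per_visitor():
    """Step 4: revenue per visitor, per channel, in whole currency units."""
    visitors = defaultdict(int)
    for channel in visits.values():
        visitors[channel] += 1
    return {
        channel: revenue_cents[channel] / 100 / count
        for channel, count in visitors.items()
    }
```

A usage pass looks like `record_visit("s1", "aio")` at landing, `handle_stripe_event(payload)` inside the webhook endpoint, and `revenue_per_visitor()` in a reporting job. Keeping the join server-side is the design point: the checkout can complete days later without depending on the browser still holding the cookie.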

If you write content for informational or procedural keywords, AIO is now the third-largest answer surface for your work, after classic blue links and ChatGPT browsing mode. The five citation signals (top-10 rank, schema, Direct Answer, question-shaped H2s, entity disambiguation) compound; ship all five or expect mixed results.

If you have not yet thought about AI surface measurement at all, start with Search Console's "Search Appearance: AI Overview" dimension — it is free, it gives you impression-side visibility, and it tells you which of your pages are already cited. Pair it with server-side first-party tracking to close the click-side gap GA4 leaves open.

For the structural GEO playbook (schema, llms.txt, sameAs disambiguation, the FAQ-density mechanics), the how to get cited by AI engines deep-dive covers what works across all three citation surfaces, not just AIO. For the revenue measurement architecture this article gestures at, the cookieless revenue analytics feature page shows the actual server-side join.

A short take, since most readers want a single sentence: ship schema, ship a Direct Answer paragraph, get to top-3 organic on your target queries, and instrument server-side measurement before you start claiming AIO ROI numbers.

SGE (Search Generative Experience) was the labs-stage prototype Google ran from May 2023 through early 2024. AI Overviews is the production successor, launched broadly in May 2024 and expanded through 2025-2026. The mechanics are similar (LLM-generated answer at the top of the SERP with linked sources), but AI Overviews ships to the default SERP with no opt-in, draws from a narrower 'trusted source' allowlist than SGE did, and appears on roughly 13-15% of US English queries as of Q1 2026 per Search Engine Land tracking.

Roughly 13-15% of US English Google SERPs in Q1 2026, with heavy skew by query class. Informational queries trigger AI Overviews around 40% of the time, procedural 'how to' queries above 50%, YMYL (medical, legal, financial) only 5-8%, and transactional or branded queries under 3%. Mobile triggers slightly more often than desktop. The appearance rate has crept up from roughly 7% at launch in mid-2024.

Five signals move the needle: existing top-10 organic ranking for the query, structured data (Article, FAQPage, HowTo JSON-LD), question-shaped H2 headers that match conversational queries, a Direct Answer paragraph under 120 words near the top of the page, and entity disambiguation via sameAs links. Pages already ranking in positions 1-3 are cited roughly 4 times more often than pages in positions 4-10, per Semrush AIO research. Schema and Direct Answer matter more for the long tail.

Because AI Overviews citation clicks land without a conventional referrer. Google strips the Referer header on most outbound AIO clicks, and the destination URL has no UTM parameters. GA4 sees a session with no referrer and no campaign tags, so it buckets it as Direct/(none) by default. There is no in-GA4 fix. Server-side first-party tracking that detects AIO referral patterns (User-Agent hints, landing-page URL signatures, time-of-day patterns) recovers most of it but requires custom instrumentation.

Per Ahrefs 2025 click-through-rate research, organic blue-link CTR drops roughly 30-40% on informational queries when an AI Overview appears, and 10-15% on commercial-intent queries. The cited footnotes inside the AIO block earn an estimated 2-4% click-through on their own. Net effect: if you are cited, you recover some traffic; if you rank below the AIO and are not cited, you absorb the full CTR hit. The asymmetry is why citation is now a measurable revenue lever, not a vanity metric.
