AI Search

Does GEO Actually Drive Revenue? An Honest Answer

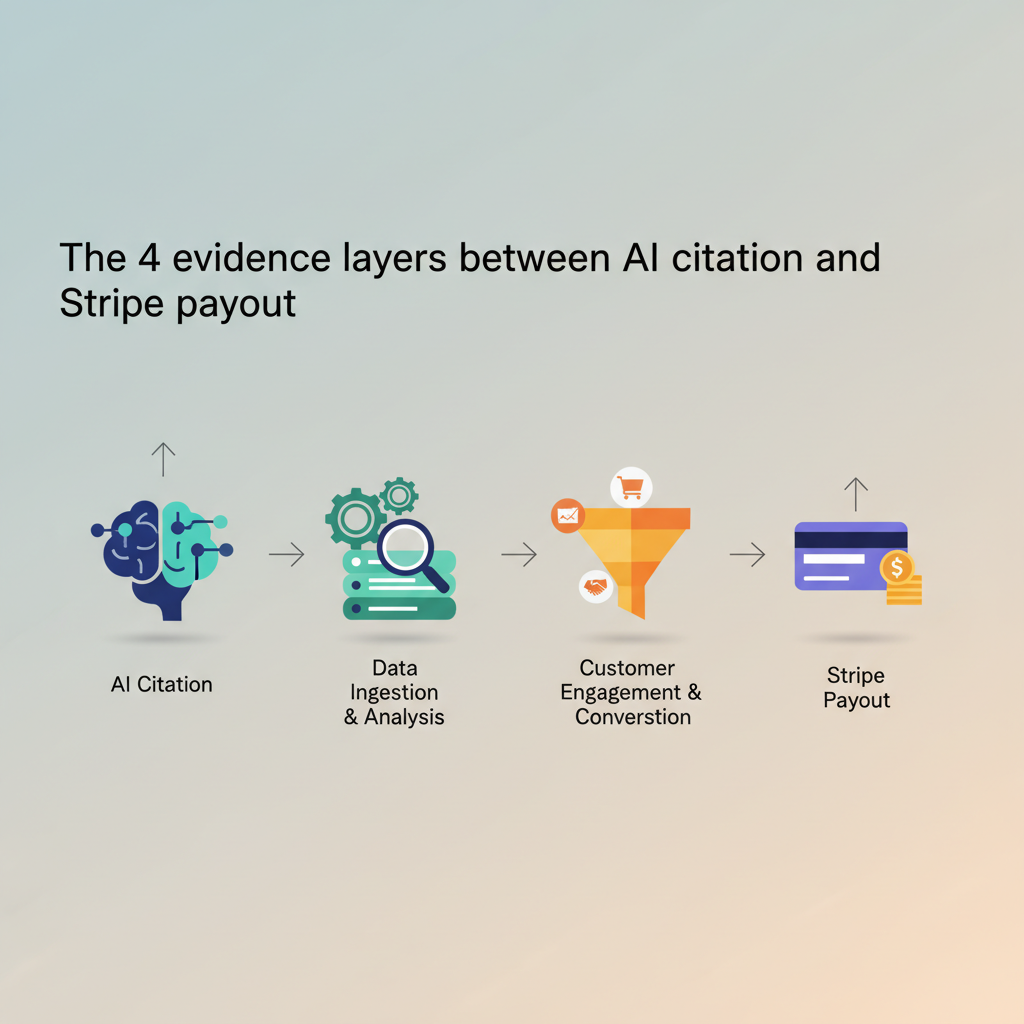

GEO can drive revenue, but proving it requires architecture most teams don't have. The 4 evidence layers between AI citations and Stripe payouts.


GEO drives revenue when three things line up: AI engines are citing your pages, the cited answers are sending sessions that actually reach those pages, and the join from session to Stripe customer is plumbed end to end. When any one of the three breaks, GEO is a vanity exercise that looks great in a vendor dashboard. The hard part is not getting cited. The hard part is proving the cited traffic paid you. Most teams skip straight from "we are getting cited" to "GEO is working" without ever touching the measurement stack in between.

| Spec | Value |
|---|---|
| Evidence layers between AI citation and Stripe revenue | 4 (citation, impression, referral, paid join) |
| GA4 default attribution for AI-engine referrals | ~100% bucketed as Direct/(none) |
| Cookie-banner GA4 event loss (EU-heavy traffic) | ~30-60% of events refused or modeled |
| Attrifast Pro tier (Layer 4 plumbing) | $9.99-29/mo, shipped |
| Attrifast Premium tier (GEO content engine + measurement bundle) | $199/mo, waitlist 2026-05-09 |
| Rankai pricing | Not public (For Businesses / For Agencies tiers) |
| Seobotai pricing | $49/mo |
| Stripe webhook delivery guarantee | At-least-once (requires idempotency) |
| Typical content-to-Stripe-revenue lag (SaaS) | 30-60 days |
| Standard attribution window for ChartMogul-style models | 90 days |

I have spent the last six months running this stack across attrifast.com plus three client SaaS properties. Most of what follows comes from watching the joins fail in production — server-side first-party scripts that capture the session fine, then lose the customer at Stripe webhook time because the handler wasn't idempotent. Or the reverse: clean Stripe webhooks, no upstream first-party identifier to join on. The pattern is the same every time. Each evidence layer is straightforward in isolation. The cumulative join is what breaks.

The honest answer to "does GEO drive revenue?" is yes-in-principle and rarely-proven-in-public. Classic SEO had two decades to build out measurement infrastructure: GA4 channel grouping, Google Search Console, UTM parameters, ad-platform conversion APIs, and a small library of attribution-model conventions. GEO has had about 18 months. The plumbing that lets you say "this Stripe payment came from a Perplexity citation" is mostly do-it-yourself today.

Three structural reasons measurement is harder for GEO than for SEO:

Each of those is solvable. Combined, they are why most "GEO drives revenue" claims you read in 2026 are still Layer 1 or Layer 2 evidence.

The diagram shows the dropout points. Even with perfect Layer 1-2 vendor reporting, the journey to Layer 4 has three independent failure modes. A team running GEO without Layer 3-4 plumbing is measuring the first two steps of a four-step pipeline and reporting it as the whole pipeline.

The "does GEO drive revenue?" question stops being abstract once you split the evidence into layers and ask which layer the vendor or case study actually demonstrates.

| Layer | What it measures | Typical source | Sufficient for revenue claim? |
|---|---|---|---|
| Layer 1 | Vendor self-report: "we got you cited" | DFY content engine dashboard | No |
| Layer 2 | Citation tracking + impressions + keyword ranks | Profound, Otterly, GSC, Semrush | Input evidence only |
| Layer 3 | AI-engine referral traffic: a session lands on your site | Server-side first-party, custom referrer rules | Necessary, not sufficient |
| Layer 4 | Stripe-revenue join: paying customer back to AI-engine session | Server-side analytics + Stripe webhook + identity join | Yes |

A Layer 1 claim looks like: "We got your site mentioned in 5 ChatGPT answers this month." That is true and useful, but it does not say a paying customer arrived. A Layer 2 claim looks like: "GSC impressions grew 1354% in 90 days." That is a signal of search visibility, not revenue. A Layer 3 claim looks like: "We saw 2,400 sessions from chat.openai.com this quarter." That is the closest most teams get without dedicated plumbing, and it still doesn't prove any of them paid.

Layer 4 is the only one that answers the literal question: "Customer X paid us $99/month and their first session originated from a Perplexity citation of our blog post on attribution windows." That sentence requires three pieces of working infrastructure, described in the engineering section below. Most GEO programs today produce all of Layer 1, much of Layer 2, some of Layer 3, and almost none of Layer 4.
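To make that Layer 4 sentence concrete, here is a minimal first-touch join in Python. It is a sketch under stated assumptions, not any vendor's implementation: it assumes each session has already been joined to a Stripe customer ID, it uses the 90-day attribution window from the spec table, and the `Session`/`Payment` shapes and `first_touch_revenue` function are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical record shapes; real rows would come from your analytics store and Stripe.
@dataclass
class Session:
    customer_id: str      # set once the anonymous session ID is joined to a customer
    started_at: datetime
    source: str           # e.g. "perplexity", "chatgpt", "direct"

@dataclass
class Payment:
    customer_id: str
    paid_at: datetime
    amount_cents: int

ATTRIBUTION_WINDOW = timedelta(days=90)  # assumed 90-day lookback

def first_touch_revenue(sessions, payments, window=ATTRIBUTION_WINDOW):
    """Credit each payment to the source of the customer's earliest session inside
    the lookback window; payments with no qualifying session go to 'unattributed'."""
    by_customer = {}
    for s in sessions:
        by_customer.setdefault(s.customer_id, []).append(s)

    totals = {}
    for p in payments:
        candidates = [
            s for s in by_customer.get(p.customer_id, [])
            if p.paid_at - window <= s.started_at <= p.paid_at
        ]
        if candidates:
            source = min(candidates, key=lambda s: s.started_at).source
        else:
            source = "unattributed"
        totals[source] = totals.get(source, 0) + p.amount_cents
    return totals
```

A customer whose earliest in-window session came from Perplexity has the whole payment credited there, even if a later session was direct; a session older than the window is ignored, so that payment lands in `unattributed` rather than being silently credited to an AI engine.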

Layer 1 — citation evidence ████████████████████████████ ~95% of GEO programs produce this

Layer 2 — impression / rank data ███████████████████████ ~80% have access via GSC + tools

Layer 3 — AI-engine referral hits ████████████ ~30-40% have detection plumbed

Layer 4 — Stripe webhook join ██ ~5-10% have full join shipped

The DFY content-engine vendors publish real case studies. The numbers are real. They are also Layer 1-2 numbers, framed in a way that lets readers infer revenue without claiming it directly. This is not a criticism — it is the categorical limit of what a vendor can claim, because the customer's Layer 3-4 stack is the customer's problem.

Some concrete examples from public case studies, named so you can verify:

I want to be precise. None of those vendors are claiming revenue. They are claiming inputs. The slip happens in how readers interpret them. "1354% impressions" reads like "1354% revenue" if you don't pay attention. The discipline is to write GEO case studies that label which layer you are reporting and to refuse to imply revenue when the evidence is impressions.

Rankai's "Iterative SEO + GEO Engine" approach is sound — they cycle content, flag underperformers, and rewrite. That moves Layer 1-2 metrics. The customer is then on their own to plumb Layer 3-4. Same for seobotai's "100% autopilot" framing. Both are good at the upstream part. Neither ships the downstream measurement. Saying this is fair criticism of an industry structural gap, not an attack on either vendor.

Five yes/no questions to figure out which layer your team's GEO evidence actually sits at. Run through them honestly — the placement is usually lower than expected.

Self-audit: where does your GEO evidence sit?

Q1. Do you have written confirmation (screenshots, vendor reports) that your brand has been cited in at least one AI-engine answer in the last 30 days?

Q2. Can you produce a number for monthly citation count, AI-engine impressions, or AI-mention growth, sourced from a tool other than your own vendor's dashboard?

Q3. Can you produce a session count of visitors who arrived at your site from an AI engine in the last 30 days — and is that session count captured outside GA4's Direct/(none) bucket?

Q4. For each session captured in Q3, do you have a stable identifier that survives across page loads, consent banners, and ITP cookie clamps, and that joins to your CRM or Stripe customer record?

Q5. For each paying Stripe customer in the last 90 days, can you produce the AI-engine source (if any) of their first or last session — and reconcile that source attribution against your Stripe payment events idempotently?
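One way to score the audit is to treat each question as a gate: your evidence sits at the highest layer for which that question and every earlier one is a yes. The mapping below (Q1 to Layer 1, Q2 to Layer 2, Q3 to Layer 3, Q4 and Q5 together to Layer 4) is my reading of the questions, and `evidence_layer` is a hypothetical helper, not part of any tool.

```python
def evidence_layer(q1, q2, q3, q4, q5):
    """Return the highest layer (0-4) reached when each question gates the next.

    Assumed mapping: Q1 -> Layer 1, Q2 -> Layer 2, Q3 -> Layer 3,
    and Q4 AND Q5 together -> Layer 4.
    """
    gates = [q1, q2, q3, q4 and q5]
    layer = 0
    for answered_yes in gates:
        if not answered_yes:
            break  # a "no" caps the placement, whatever the later answers say
        layer += 1
    return layer
```

The gating is deliberate: a yes on Q5 without a stable identifier (Q4) usually means the join is being approximated, so it should not lift the placement to Layer 4.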

Most teams running active GEO programs in 2026 land at Layer 2 or Layer 3 on this audit. Layer 4 requires deliberate engineering work that most vendors do not include in their scope.

The three engineering pieces that turn AI-engine citations into auditable revenue numbers are unglamorous individually and finicky together. I have shipped all three; here are the failure modes as I have hit them in production.

Client-side GA4 cannot reliably detect AI-engine referrals because the Referer header is stripped or opaque. The fix is a server-side endpoint that inspects the incoming request and classifies it. Look at the User-Agent string, the landing-page URL signature (post-AI-citation pages often have UTM patterns or unusual path structures), and the timing pattern (AI-engine traffic clusters around prompt-popular hours). Match those to a small rule table:

if user_agent contains "OAI-SearchBot" → source = chatgpt-search

if referer contains "chat.openai.com" → source = chatgpt

if referer contains "perplexity.ai" → source = perplexity

if referer contains "claude.ai" or "anthropic" → source = claude

if referer contains "gemini.google.com" → source = gemini

if no referer AND landing-page is /blog/<long-tail> AND user_agent is human → suspect ai-engine, flag for review

The last rule is the messy one. A meaningful fraction of AI-engine clicks arrive with no referrer at all. Heuristics catch most of them, never all. (We tested four different heuristic stacks across three client sites and the best one recovered roughly 80% of suspected AI sessions — the other 20% was unrecoverable noise.)
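The rule table above can be sketched as a small Python classifier. Several details are assumptions rather than the author's production rules: referrers are matched on hostname suffix instead of raw substring (so a query string mentioning `perplexity.ai` does not count), `chatgpt.com` is added alongside `chat.openai.com` because ChatGPT is now also served from that domain, `anthropic.com` stands in for the looser "anthropic" match, and the "human" and "/blog/<long-tail>" checks are crude hypothetical heuristics. One further tweak: OAI-SearchBot is, to my knowledge, OpenAI's search crawler rather than a person clicking through, so its label is kept separate and can be excluded from revenue joins.

```python
from urllib.parse import urlparse

# (referrer domain, source label), following the rule table above.
REFERRER_RULES = [
    ("chat.openai.com", "chatgpt"),
    ("chatgpt.com", "chatgpt"),        # assumption: newer ChatGPT domain
    ("perplexity.ai", "perplexity"),
    ("claude.ai", "claude"),
    ("anthropic.com", "claude"),       # assumption: stands in for "anthropic"
    ("gemini.google.com", "gemini"),
]

BOT_MARKERS = ("bot", "crawler", "spider")  # crude, hypothetical human-vs-bot check

def looks_human(user_agent):
    lowered = user_agent.lower()
    return bool(user_agent) and not any(m in lowered for m in BOT_MARKERS)

def is_long_tail_blog_path(path):
    # Hypothetical definition of "/blog/<long-tail>": a slug of four or more hyphenated words.
    parts = path.strip("/").split("/")
    return len(parts) == 2 and parts[0] == "blog" and parts[1].count("-") >= 3

def classify_request(user_agent, referer, landing_path):
    ua = user_agent or ""
    if "OAI-SearchBot" in ua:
        # Crawler fetch, not a human click: keep it out of revenue attribution.
        return "chatgpt-search"
    host = (urlparse(referer).hostname or "") if referer else ""
    for domain, source in REFERRER_RULES:
        if host == domain or host.endswith("." + domain):
            return source
    if not referer and is_long_tail_blog_path(landing_path) and looks_human(ua):
        return "suspect-ai-engine"  # flag for manual review
    return "other"
```

For example, a browser request with referer `https://www.perplexity.ai/search?q=x` classifies as `perplexity`, while a referer-less human visit to a long blog slug comes back as `suspect-ai-engine` for review rather than being counted outright.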

The session must carry an identifier from the anonymous first visit all the way through signup, login, and payment. The naive approach is a client-side cookie. The problem is that browser cookie clamps eat the join: for one of my products in 2020, ITP 2.3 wiped out 30%+ of Safari paid-search attribution overnight.

The fix is a first-party, server-rendered identifier: a short-lived session ID generated by your server, stored in a cookie scoped to your own domain (still a cookie, but written by your server and first-party scoped), and stamped onto every payment event as Stripe metadata. The CNIL's audience-measurement exemption (rotating salt, truncated IP, no cross-site linkage, no ad use) lets this run without a consent banner in France and several other EU jurisdictions, per CNIL's published guidance.
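A minimal sketch of the identifier side, using only the Python standard library: the server mints a random session ID, sends it in a `Set-Cookie` header, and later copies it into the keyword arguments you would pass to `stripe.checkout.Session.create(**params)`. The cookie name `fp_sid`, the 7-day lifetime, the metadata keys, and the placeholder URL are all assumptions (the text above only says "short-lived"); `mode`, `line_items`, `client_reference_id`, `metadata`, `subscription_data.metadata`, and `success_url` are real Checkout Session parameters. The rotating-salt and IP-truncation parts of the CNIL setup are not shown.

```python
import secrets
from http.cookies import SimpleCookie

SESSION_COOKIE = "fp_sid"               # hypothetical cookie name
SESSION_TTL_SECONDS = 7 * 24 * 3600     # assumed lifetime; tune to your own policy

def new_session_cookie():
    """Mint a random session ID and the Set-Cookie header value that carries it."""
    session_id = secrets.token_urlsafe(16)
    cookie = SimpleCookie()
    cookie[SESSION_COOKIE] = session_id
    morsel = cookie[SESSION_COOKIE]
    morsel["path"] = "/"
    morsel["max-age"] = SESSION_TTL_SECONDS
    morsel["secure"] = True
    morsel["httponly"] = True    # not readable from client-side script
    morsel["samesite"] = "Lax"
    return session_id, morsel.OutputString()

def checkout_params(session_id, ai_source, price_id, success_url="https://example.com/welcome"):
    """Keyword arguments for stripe.checkout.Session.create() that stamp attribution on the payment."""
    attribution = {"fp_session_id": session_id, "ai_source": ai_source}  # hypothetical keys
    return {
        "mode": "subscription",
        "line_items": [{"price": price_id, "quantity": 1}],
        "client_reference_id": session_id,
        "metadata": attribution,                         # on the Checkout Session
        "subscription_data": {"metadata": attribution},  # copied onto the Subscription
        "success_url": success_url,                      # placeholder URL
    }
```

Putting the attribution on `subscription_data.metadata` as well as the Checkout Session means a `customer.subscription.created` webhook can read it straight off the event object, without a second API call.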

When the payment event fires, Stripe's webhook delivery is at-least-once, not exactly-once, per Stripe's webhook documentation. That means your handler will sometimes receive the same customer.subscription.created event twice. If your attribution write is not idempotent, you'll double-count or, worse, overwrite a correct first-touch attribution with a duplicate event arriving five seconds later.

The cleanest pattern: use the Stripe event ID as the idempotency key, write attribution once, and short-circuit on duplicate. We had to retrofit this on a client SaaS last year after their attribution report started showing channel revenue 1.7x what Stripe's revenue report showed. The bug was a non-idempotent webhook handler doubling roughly 40% of events under high load.
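A minimal sketch of that pattern with SQLite from the standard library: the event ID is the primary key of a `processed_events` table, so a redelivered event short-circuits, and the attribution row is written with `INSERT OR IGNORE` so a later event can never overwrite an existing first-touch value. Table and column names are hypothetical, and the sketch assumes the payload has already been signature-verified (for example with `stripe.Webhook.construct_event`) and parsed into a dict.

```python
import sqlite3

def init_store(conn):
    conn.executescript("""
        CREATE TABLE IF NOT EXISTS processed_events (event_id TEXT PRIMARY KEY);
        CREATE TABLE IF NOT EXISTS attribution (
            customer_id TEXT PRIMARY KEY,
            ai_source   TEXT NOT NULL,
            event_id    TEXT NOT NULL
        );
    """)

def handle_event(conn, event):
    """Process one verified Stripe event dict; return False for a duplicate delivery."""
    with conn:  # one transaction: both writes commit together or not at all
        cur = conn.execute(
            "INSERT OR IGNORE INTO processed_events (event_id) VALUES (?)",
            (event["id"],),
        )
        if cur.rowcount == 0:
            return False  # event ID already recorded: short-circuit
        if event["type"] == "customer.subscription.created":
            sub = event["data"]["object"]
            # First-touch: keep whatever attribution row already exists for this customer.
            conn.execute(
                "INSERT OR IGNORE INTO attribution (customer_id, ai_source, event_id) "
                "VALUES (?, ?, ?)",
                (sub["customer"], sub["metadata"].get("ai_source", "unattributed"), event["id"]),
            )
    return True
```

Recording the event ID and the attribution in the same transaction matters: if the handler crashes between the two writes, neither commits, and Stripe's retry gets a clean second attempt instead of being skipped as a duplicate.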

Three things break in production every time:

None of those are catastrophic on their own. Combined, though, they mean a team without explicit testing typically reports Layer 4 numbers that are 60-80% accurate. Better than nothing, but worth flagging in any "we measured X" claim.

This is the scope statement. I want it clear because every other GEO article on the internet starts with "we ran this on ourselves and saw..." and most of the time the math doesn't reconcile.

What we have today, on attrifast.com:

What we do not have yet:

What this means for the article you are reading: this is methodology, not a case study. It explains the measurement architecture and the four-layer evidence model. It does not say "GEO drove $X for attrifast" because we are not yet in a position to claim that defensibly. When we are, those numbers will appear in a follow-up post with the full reconciliation.

The reason for spelling this out: most "we measured GEO ROI" articles in 2026 are running on Layer 1-2 evidence and rounding up. Refusing to round up is, I think, the strongest credibility signal in this space. It is also the one most case-study formats are structurally bad at.

If you can't yet prove GEO drives revenue, should you wait? No. The cost structure makes it an asymmetric bet — cheap to start, durable upside, reversible if it doesn't work. The "right" amount of GEO investment is well above zero even before Layer 4 measurement is ready.

| Move | Cost | Effort | Time-to-signal | Reversibility | Required stack |
|---|---|---|---|---|---|
| Add schema markup (Article, FAQPage, Organization) | Engineering: 1-2 days | One-time | 2-4 weeks | Fully reversible | Existing site |
| Question-shaped H2s + Direct Answer in blog posts | Editorial: 1 hour per post | Per post | 4-8 weeks | Fully reversible | Existing CMS |
| Author identity with sameAs links | Engineering: 1 day | One-time | 4-12 weeks | Fully reversible | Existing site |
| Independent citation tracking (Profound, Otterly, or manual) | $50-300/month | Ongoing | Immediate | Cancel anytime | Standalone tool |
| Server-side AI-engine referral detection | Engineering: 1-2 weeks | One-time | 1-2 weeks | Reversible | Custom or Attrifast Pro |
| Session-to-Stripe join (full Layer 4) | Engineering: 2-4 weeks | One-time | 30-90 days | Reversible | First-party analytics + Stripe handler |
| DFY content engine (rankai, seobotai) | $49/month to thousands per month | Ongoing | 60-180 days | Cancel anytime | Vendor-dependent |

The top three rows are free or near-free, fully reversible, and show signal within 2-12 weeks. There is no defensible reason to skip them while waiting on perfect measurement. The bottom rows are higher commitment, but each is independently cancelable. Per ChartMogul's SaaS attribution research, the standard SaaS revenue-attribution window is 90 days, and content-driven Stripe revenue typically lags publish dates by 30-60 days. Reading those two numbers together: even if you start GEO today, the earliest you would see clean Layer 4 evidence is ~120 days out. Waiting to "see if it works" before starting is itself a 4-month delay.
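The ~120-day figure is plain date arithmetic: the shorter 30-day lag plus the 90-day window gives 120 days, and the 60-day end of the lag range pushes it to 150. A tiny sketch, with `earliest_clean_layer4` as a hypothetical helper name:

```python
from datetime import date, timedelta

def earliest_clean_layer4(start, publish_lag_days=30, attribution_window_days=90):
    """Earliest date by which a full attribution window of revenue can exist
    after published content starts converting (lag + window, in days)."""
    return start + timedelta(days=publish_lag_days + attribution_window_days)
```

Starting on 2026-01-01 gives 2026-05-01 with the 30-day lag, or 2026-05-31 with the 60-day lag.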

The discipline I run with: 90 days. Start the GEO playbook (free inputs). In parallel, build or buy the Layer 3-4 measurement stack. At day 90, audit honestly — Layer 1-2 should show positive signal (citations rising, impressions rising), and Layer 4 should be readable for at least the early cohort. If both fail, kill the program. If either shows signal, continue and reassess at 180 days.
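The day-90 rule can be written down as a small decision function, which keeps the audit honest by fixing the criteria in advance. Reading "positive Layer 1-2 signal" as both citations and impressions rising between day 0 and day 90 is an assumption, as are the `Snapshot` and `day90_decision` names; `layer4_readable` stands for whether at least the early cohort can be attributed.

```python
from dataclasses import dataclass

@dataclass
class Snapshot:
    citations: int     # e.g. monthly AI-answer citation count from a tracking tool
    impressions: int   # e.g. GSC impressions for the tracked pages

def layer12_positive(day0, day90):
    # Assumed reading of "positive signal": citations and impressions both rising.
    return day90.citations > day0.citations and day90.impressions > day0.impressions

def day90_decision(day0, day90, layer4_readable):
    """Kill only if both checks fail; otherwise continue and reassess at day 180."""
    if not layer12_positive(day0, day90) and not layer4_readable:
        return "kill"
    return "continue, reassess at day 180"
```

With citations going from 3 to 9 and impressions from 1,000 to 2,500, the program continues even if Layer 4 is not readable yet; if both stall and Layer 4 shows nothing, it is killed.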

The 90-day window comes from how long SaaS content takes to compound into Stripe revenue, not from a marketing convention. Per Baremetrics' open metrics dashboards, content-to-MRR lag for bootstrapped SaaS tends to cluster 30-60 days, with a longer tail for organic. 90 days gives you the lag plus a buffer for measurement noise.

The honest answer to "should I run GEO?" splits on framing. Treat it as an SEO extension and the answer is yes, run it now: the inputs cost almost nothing. Treat it as a proven revenue lever and the answer is not yet: the measurement is too immature. The cost of running it as an SEO extension while you wait for measurement to mature is roughly zero. The cost of waiting until measurement is "ready" is the roughly four-month wait for clean Layer 4 evidence, plus the compounding loss of citation slots competitors are claiming.

What this article does not cover, and where readers should look elsewhere:

GEO can drive revenue, but most public "GEO drives revenue" claims sit at Layer 1 or Layer 2 of the evidence stack — citations, impressions, and keyword ranks — not at Layer 4, where AI-engine referrals join to Stripe payouts. The two are correlated but not the same. A 1354% impressions lift is meaningful as input evidence; it is not yet revenue evidence. To prove revenue, you need server-side first-party tracking that captures AI-engine referrals before GA4 lumps them as Direct/(none), a session-to-customer join, and Stripe webhook idempotency on the back end. Most teams running GEO programs don't have all three plumbed yet.

Layer 1 is vendor self-report: "we got you cited." Layer 2 is citation tracking and impressions: your brand appearing in AI answers, measurable via tools like Profound, Otterly, or manual prompt checks. Layer 3 is referral traffic: a session hitting your site from an AI engine, where GA4 fails because it lumps most AI referrals into Direct/(none). Layer 4 is the Stripe webhook join: a paid customer whose first-touch or attributed session came from an AI engine. Layers 1 through 3 are each necessary but not sufficient; only Layer 4 answers the revenue question. Most public case studies stop at Layer 1 or Layer 2 because the customer's Layer 3 and Layer 4 stack is the customer's problem.

Because most AI engines either strip the Referer header, send opaque referrers like chat.openai.com, or land users on the destination without any UTM parameters. GA4's default channel grouping has no rule that matches these, so it buckets the session as Direct/(none). Per Google's own GA4 default channel grouping documentation, the AI engines are not in the rule set as of 2026. Server-side tracking that inspects User-Agent strings, landing-page signatures, and request headers recovers most of these sessions, but the recovery needs custom instrumentation. GA4's UI alone won't get you there.

Only partially. Layer 1-2 evidence (citations, impressions, ranks) tells you GEO is working as an input. Layer 3 evidence (AI-engine referral traffic) tells you sessions are landing. None of those answer "is revenue happening?" For that, you need Layer 4 — the join from a paying customer back to their first or last AI-engine referral. You can approximate Layer 4 with crude proxies: comparing aggregate revenue trends to GEO publish dates, surveying new customers on "where did you hear about us," or matching email opt-in form data to attribution cookies. None of those are clean. A proper Stripe webhook join is the only honest answer.

Three pieces. First, a server-side first-party analytics script that detects AI-engine referrals via User-Agent and landing-page patterns (because GA4 won't). Second, a session-to-customer join that survives consent banners and ITP cookie windows — typically a server-rendered first-party identifier scoped to your own domain. Third, a Stripe webhook handler that reads the customer's attribution metadata at the moment of payment and writes it idempotently to your reporting store. With those three, you can show "this customer paid us $X and their first session came from a Perplexity citation." Without them, you are guessing.

Yes, with eyes open. GEO is an asymmetric bet: cheap to start (the playbook overlaps roughly 80% with existing SEO), durable upside (citations compound), and reversible (if it doesn't work, you've still produced regular SEO content). The honest framing: run GEO for 90 days using Layer 1-2 evidence to confirm the inputs are working (citations rising, impressions rising, branded search rising), and in parallel build the Layer 3-4 stack so that when Q3-Q4 results land you can attribute them. Skipping the measurement build and running GEO blind for 12 months is a worse bet than running it instrumented for 6.
